Google released Gemma 4 this week. Open-weight, Apache 2.0 licensed, multimodal, scalable from edge devices to enterprise data centers. It is arguably the most accessible enterprise-grade AI model released so far.

And yet, according to Gartner’s 2026 research, 47 percent of companies have zero AI agents running in production.

These two facts are not contradictory. They are the same story.

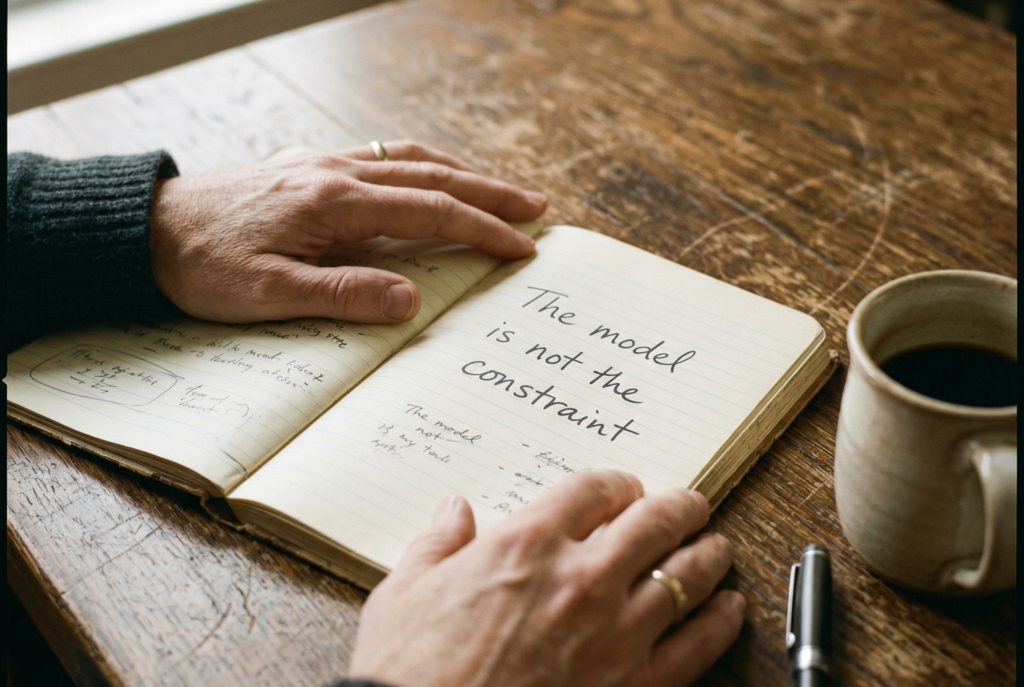

The bottleneck on AI adoption has never been model access. For the past three years, capable models have been available to any team willing to use them. What has consistently blocked deployment is something that no model launch fixes: systems thinking.

This post is for early-stage B2B founders and operators who want to stop experimenting and start shipping. It covers what Google’s Gemma 4 release actually means for small teams, why most businesses are still stuck, and a practical sequence for finding and building your first real AI agent. Not a demo. A system that runs.

What Google’s Gemma 4 Launch Actually Changes

Gemma 4 matters for three specific reasons that are relevant to B2B teams building internal automation.

First, it is open-weight. You can download and run it on your own infrastructure, which means your data does not have to leave your environment. For early-stage B2B companies handling customer data, procurement workflows, or sales intelligence, this removes a major compliance objection to AI-powered automation.

Second, it is Apache 2.0 licensed. Unlike some open-weight models that restrict commercial use, Gemma 4 can be used freely in commercial products and workflows. There is no royalty, no usage limit, and no restriction on building client-facing systems on top of it.

Third, it is multimodal and scalable. It handles text, images, and code across a wide range of hardware, from edge devices to data center inference. For small teams, this means you can run a capable model locally without GPU clusters.

What Gemma 4 does not change: it does not tell you what to build. It does not identify your bottleneck. It does not design your workflow or define what a good output looks like. That part is still on you.

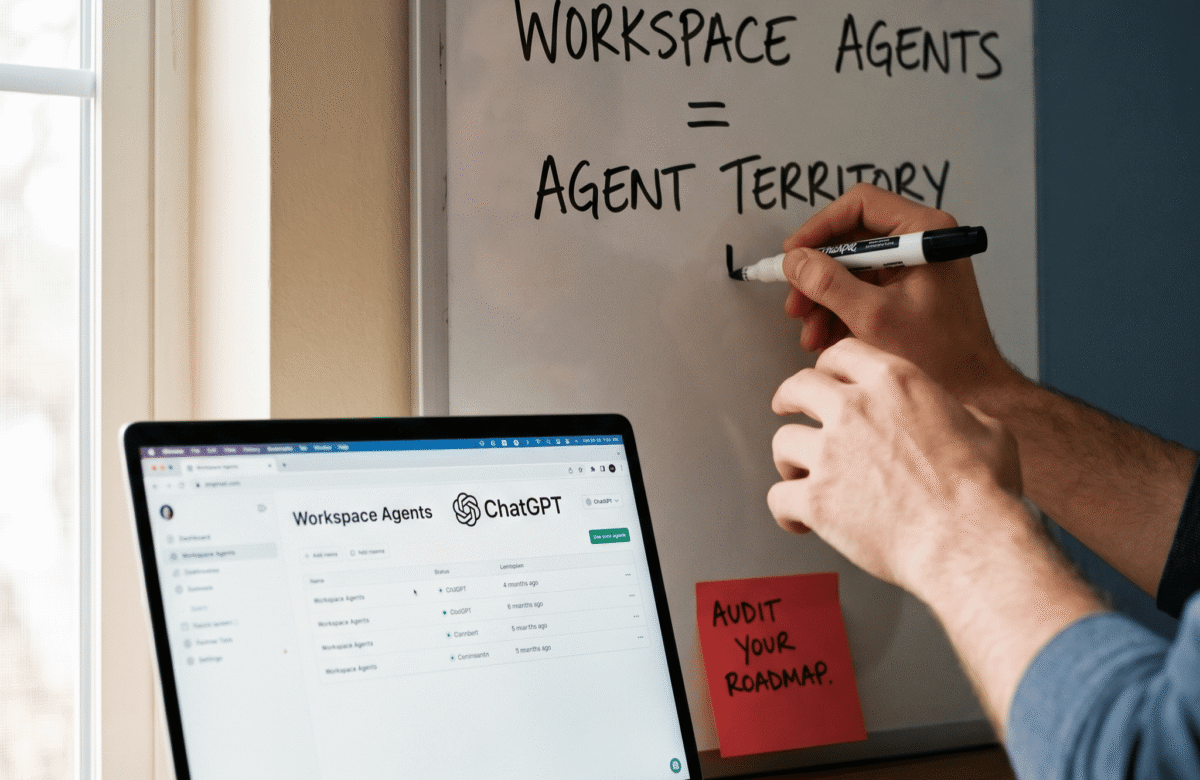

The Gap Between Model Access and Agent Deployment

Gartner’s 2026 data on AI agent adoption tells a clear story. Forty-seven percent of companies have no AI agents running in production. Thirty-two percent have between one and three agents. Only two percent have more than twenty-one agents running.

This is not a technology problem. It is a prioritization and design problem.

Consider a related finding from the same research cycle: sales representatives who use AI tools effectively are 3.7 times more likely than their peers to hit quota. The teams that have figured out where AI fits are seeing compounding returns. The teams that have not are still running experiments.

One pattern surfaces consistently across early-stage B2B clients: the companies that have deployed real AI systems started with one specific, well-defined problem. They did not start with a tool selection process. They did not start with a prompt library. They started with a workflow they already understood well enough to write down on paper.

The companies still stuck in experimentation mode share a common characteristic. They are evaluating models, building demo environments, and running pilots without committing to a first production use case. Gemma 4 is excellent news for the first group. For the second group, a better model is not the missing piece.

Three Patterns That Keep Teams Stuck

Three patterns surface consistently across early-stage B2B deployments. Each one stalls teams before they ship their first real agent.

Pattern one: starting with the tool instead of the process.

Tool selection feels like progress. Evaluating Gemma 4 versus GPT-4o versus Claude feels like meaningful work. But picking the right model for a workflow you have not yet defined is backwards. The tool selection should take about ten minutes once you know what the system needs to do. Teams that spend weeks on tool evaluation are usually avoiding the harder work of process definition.

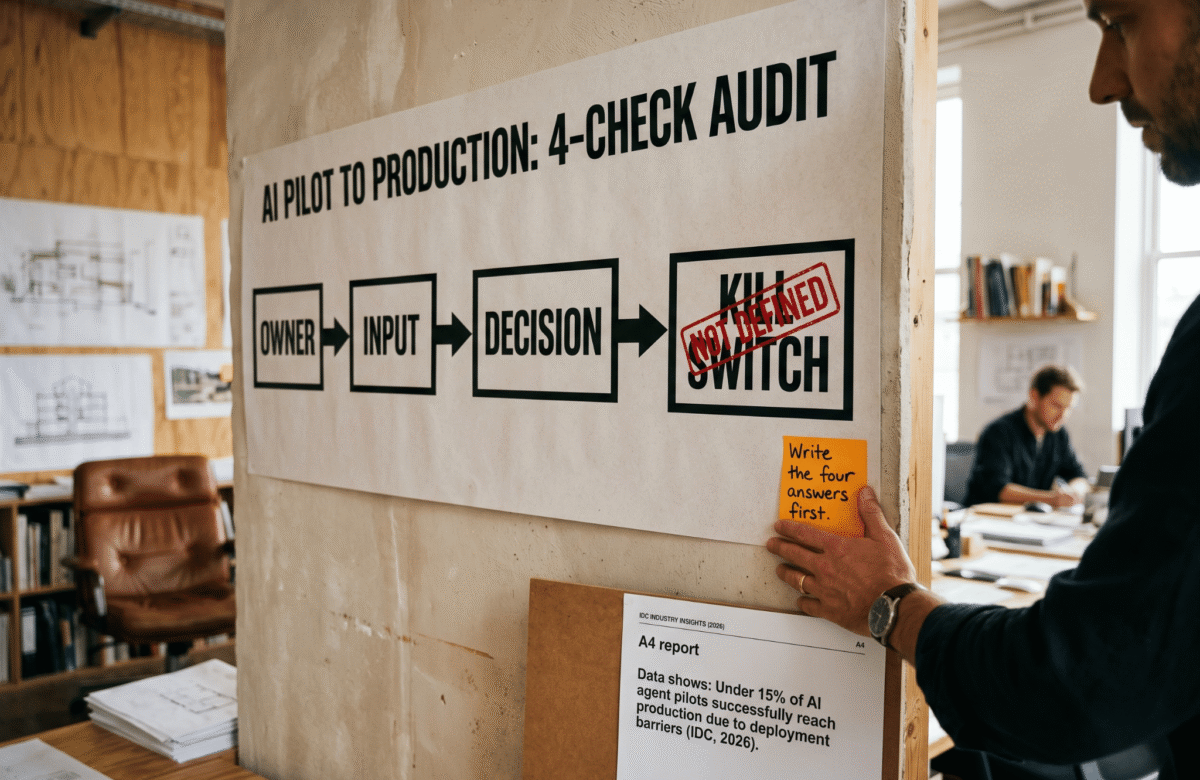

Pattern two: skipping system design and going straight to experiments.

Prompting is not system design. Running tests in a playground environment is not building a workflow. The gap between a prompt that works in a demo and an agent that runs reliably in production is almost entirely a system design gap. Before any prompt is written, the workflow needs to be mapped at the logic level. What are the inputs? What are the outputs? What does a good result look like? What happens when the result is poor? Teams that skip this step produce demos, not systems.

Pattern three: aiming at the impressive use case instead of the real bottleneck.

AI customer service bots are easy to pitch. AI research agents sound compelling in a deck. But the highest-value first deployment for most early-stage B2B teams is usually something less exciting. It is the qualification step that takes 45 minutes per lead and follows the same logic every time. It is the weekly competitive monitoring report someone assembles manually from four sources. It is the proposal customization step that could follow a template 80 percent of the time.

The boring bottleneck is almost always the right starting point. It is boring because it is consistent. Consistent processes are the ones that automate well.

A Practical Sequence for Finding Your First AI Agent

This is the sequence that works for early-stage B2B teams who want to move from experimentation to a running system.

Step one: list operational tasks, not use cases.

Do not brainstorm AI use cases. Instead, write down every operational task your team handles more than eight times per week. Be specific. Not “sales outreach” but “researching a prospect’s company before the first call.” Not “content creation” but “writing a follow-up email after a demo based on notes from that call.”

Step two: score each task on two dimensions.

First, how consistent is the decision logic? If the task follows roughly the same steps every time, it scores high. If every instance requires significant judgment from an experienced person, it scores low. Second, how much time does it consume per week across the whole team? High time plus high consistency equals highest priority.
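The scoring can be as simple as a spreadsheet, but a minimal sketch in Python shows the idea. Everything here is an illustrative assumption rather than a prescribed method: the `Task` class, the 1-to-5 consistency scale, the choice to multiply consistency by weekly hours, and the sample hours are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    consistency: int       # assumed scale: 1 (pure judgment) to 5 (same steps every time)
    hours_per_week: float  # total time across the whole team


def priority(task: Task) -> float:
    """Illustrative score: consistency multiplied by weekly hours."""
    return task.consistency * task.hours_per_week


def rank(tasks: list[Task]) -> list[Task]:
    """Return tasks ordered from highest to lowest priority."""
    return sorted(tasks, key=priority, reverse=True)


# Hypothetical sample data, not real measurements.
tasks = [
    Task("Research a prospect's company before the first call", 4, 6.0),
    Task("Write a follow-up email after a demo", 5, 3.0),
    Task("Negotiate custom contract terms", 1, 5.0),
]

for t in rank(tasks):
    print(f"{priority(t):5.1f}  {t.name}")
```

Multiplying the two dimensions means a task has to score well on both to rise to the top. A time-consuming task that depends on judgment, like the contract negotiation in the sample, drops to the bottom of the list.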

Step three: define the workflow before touching any tool.

For the highest-scoring task, write out the process at the logic level. What triggers the task? What information does it require? What are the steps? What does a good output look like? What quality checks exist? This document is your agent specification. If you cannot write it, the process is not ready to automate. If you can write it in thirty minutes, you are ready to build.
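One way to make the specification concrete is to treat it as a structured record with one field per question above. The sketch below is an assumption about how you might organize it, not a required format. The `AgentSpec` class and its field names are hypothetical, and a plain document works just as well.

```python
from dataclasses import dataclass, field


@dataclass
class AgentSpec:
    trigger: str = ""                                          # what starts the task
    required_inputs: list[str] = field(default_factory=list)   # information the task needs
    steps: list[str] = field(default_factory=list)             # the process, in order
    good_output: str = ""                                      # what a good result looks like
    quality_checks: list[str] = field(default_factory=list)    # how results are reviewed

    def missing_sections(self) -> list[str]:
        """Names of sections that are still empty."""
        return [name for name, value in vars(self).items() if not value]

    def is_ready(self) -> bool:
        """True only when every section has been filled in."""
        return not self.missing_sections()


# Hypothetical example: a follow-up email workflow with no quality checks yet.
spec = AgentSpec(
    trigger="A demo call ends and notes are saved",
    required_inputs=["call notes", "prospect name"],
    steps=["summarize notes", "draft follow-up email"],
    good_output="A short email that references two points from the call",
)

print(spec.missing_sections())  # ['quality_checks']
print(spec.is_ready())          # False
```

An empty section is a direct signal that the process is not ready to automate yet.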

Step four: run it manually with AI assistance before automating.

Before building any infrastructure, run the workflow yourself using an AI tool interactively. Treat this as validation. Does the AI produce outputs that are actually useful? Where does it fail? What needs human review? This stage catches design problems before they become production problems.
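It helps to keep a simple log during this stage so the decision to automate rests on more than impressions. The following is a minimal sketch under stated assumptions: the `TrialRun` record, the "usable as is" criterion, and the sample entries are hypothetical. What counts as a reliable acceptance rate is a judgment your team has to make.

```python
from dataclasses import dataclass


@dataclass
class TrialRun:
    task_input: str
    usable_as_is: bool      # did the output need no human correction?
    failure_note: str = ""  # what went wrong, if anything


def acceptance_rate(runs: list[TrialRun]) -> float:
    """Share of runs whose output was usable without edits."""
    if not runs:
        return 0.0
    return sum(r.usable_as_is for r in runs) / len(runs)


def failure_notes(runs: list[TrialRun]) -> list[str]:
    """Collect the notes from runs that needed correction."""
    return [r.failure_note for r in runs if not r.usable_as_is and r.failure_note]


# Hypothetical log of four manual runs.
runs = [
    TrialRun("Demo notes, prospect A", True),
    TrialRun("Demo notes, prospect B", True),
    TrialRun("Demo notes, prospect C", False, "Missed the pricing question"),
    TrialRun("Demo notes, prospect D", True),
]

print(acceptance_rate(runs))  # 0.75
print(failure_notes(runs))    # ['Missed the pricing question']
```

The failure notes matter as much as the rate. They show where human review belongs in the eventual workflow.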

Step five: automate the validated workflow.

Once the manual-with-AI version is producing reliable results, build the automation layer. This is where the model choice matters, and this is the right moment to evaluate whether Gemma 4, a hosted model, or another open-weight option fits your infrastructure and compliance requirements.

One Question to Answer This Week

Gemma 4 being available, open-weight, and free does not change the first step for most teams. The first step is still: identify the right problem.

Here is the single question worth answering this week before any tool evaluation, prompt engineering, or infrastructure planning:

What is one task your team performs more than eight times per week that follows a consistent, documentable pattern?

Write the answer down. Write the workflow down. If you can define the inputs, the steps, and what a good output looks like, you have your first AI agent waiting to be built.

The model is the last decision, not the first. Gemma 4 is a strong option when you get there. But the teams that ship real AI systems are the ones who figured out the problem before they touched the tool.

Gartner’s 2026 data shows 47 percent of companies have no AI agents running in production. The gap is not access. It is clarity. Start there.

If you are working through your first production AI deployment and want a framework for the system design phase, reach out at ashikur@lumeneze.com or book a session at calendly.com/ashikurrahaman/lumeneze.