The AI pilot-to-production gap is the single biggest tax on early-stage B2B teams this year. Pilots look great in demos. Three months later the agent has quietly been switched off. The founder blames the model. The real culprit is almost never the model.

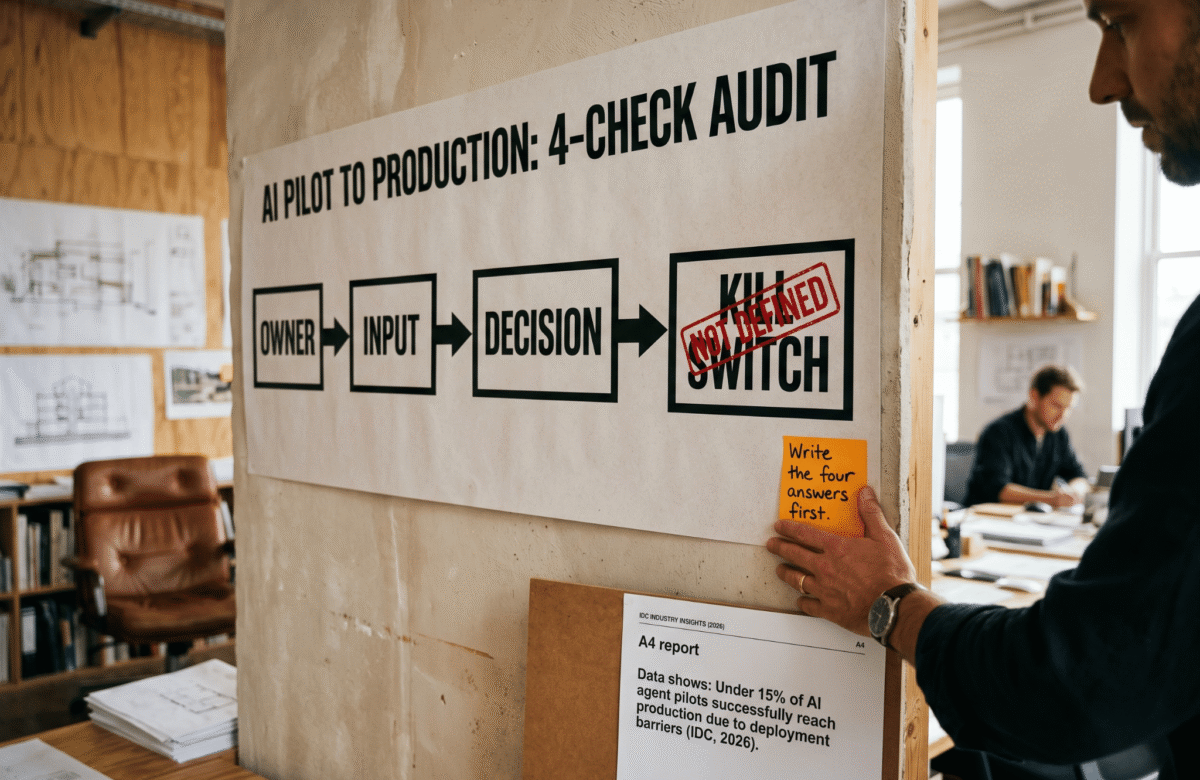

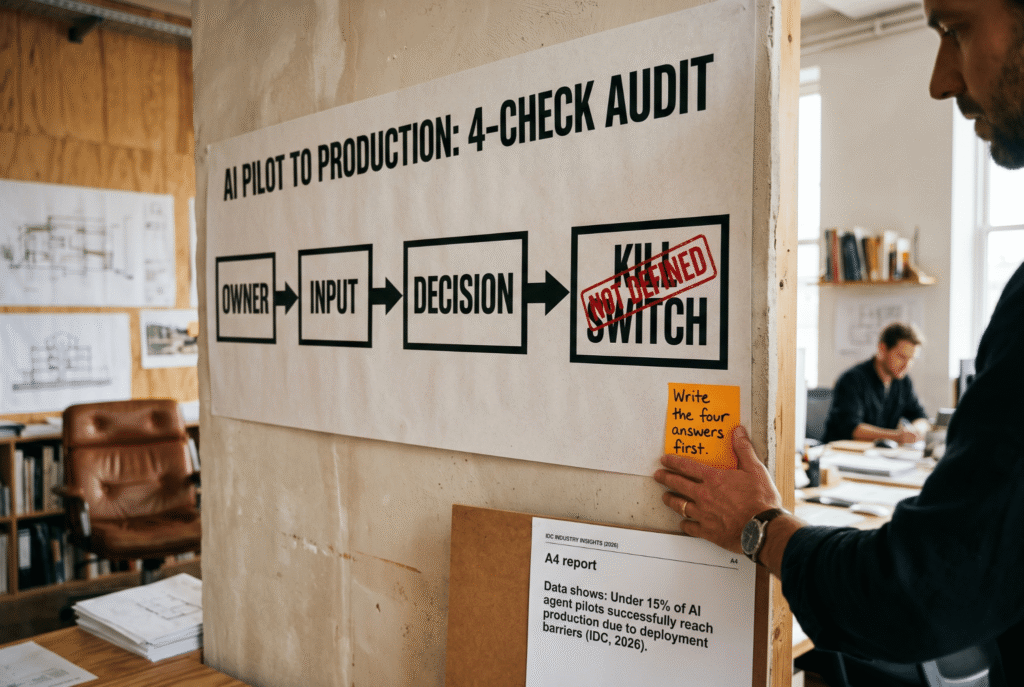

Gartner projects that more than 40 percent of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear value, and inadequate risk controls. IDC reporting from early 2026 puts the pilot-to-production conversion rate even lower: fewer than 15 percent of enterprise AI agent initiatives scale beyond the pilot phase. The pattern is consistent across company sizes and stacks.

This piece lays out the 4-check framework used before any agent gets built inside a Lumeneze engagement. Each check takes 10 minutes. Each one kills more bad builds than any platform choice.

Why AI Pilot to Production Keeps Failing

Most teams treat the move from AI pilot to production as a scaling problem. It is not. It is a clarity problem. A pilot survives because a single human keeps watch, patches errors, and explains results in the standup. Production has none of that shelter.

Across every audit the team has run in the last six months, the failure modes cluster into four categories: unclear ownership, dirty upstream data, agents mimicking decisions instead of making them, and missing shutdown conditions. The same four, every time.

So the answer is not a better model. It is a tighter pre-build audit. The checks below are the ones used at Lumeneze before a single line of automation gets written.

Check 1: Owner (Who Gets the Alert?)

The first question in every pilot-to-production audit is the coldest one: when this agent misbehaves at 2 a.m. on a Saturday, whose phone lights up? If the answer is “someone on the team” or “we will check the dashboard Monday,” the agent is already orphaned.

For example, a common pattern is an SDR team that inherits an AI outbound agent from a growth contractor. No one on the team can read the logs. No one owns the error channel. Within three weeks the agent is silently sending broken messages to half the ICP, and the founder only notices when a prospect replies with a screenshot.

Therefore, assign a named human owner before the build starts. Not a team. A person. That person gets the alerts, reviews the weekly error log, and has authority to pause the agent. Without this, every other check fails downstream.

Check 2: Input (Is the Workflow Already Clean?)

The second check in the pilot-to-production framework is about what the agent eats. An agent is not a workflow designer. It is a workflow executor at speed. If the workflow is ambiguous when a human runs it, the agent will run the same ambiguity at 10 times the pace and with 10 times the damage.

However, most founders skip the input question entirely. They see a manual process that takes two hours a day, assume it is simple, and hand it to an agent. The handoff exposes every implicit decision the human was making silently: which leads count as qualified, when to skip a step, what the exception rules are.

The input check has one rule. Can the workflow be written as a flowchart with named decision points and clean data sources in under 30 minutes? If not, the workflow is not ready for an agent. Fix the workflow first. Automate second. An ambiguous workflow fed by unclean inputs is a direct route to the escalating costs and unclear value that Gartner cites as reasons for cancellation.

Check 3: Decision (Real or Performed?)

Check three is the one that tends to surprise teams. A decision has a consequence. A performed decision has a dashboard. Most “AI decision agents” in production today are the second kind.

The test is simple. If the agent chooses option A over option B, what changes in the business the next morning? A lead gets contacted or not. A ticket gets routed or not. A price gets adjusted or not. A number changes. A human does something different. If nothing changes, the agent is not making a decision. It is generating a summary that a human re-decides anyway.

In contrast, real decision agents earn their cost because the downstream action is tied to their output. According to recent OpenAI enterprise adoption research, the projects that survive to production are the ones where a single named decision is owned end to end by the agent, not the ones that produce narrative outputs for humans to rubber-stamp.

Check 4: Kill Switch (When Does It Turn Off?)

The last check in the pilot-to-production framework is the one founders skip most often. Under what condition does this agent get turned off? Error rate above x percent. Cost per run above y. Complaint volume above z. Named metric, named threshold, named human who flips the switch.

Agents without a kill switch become structural liabilities. They keep running long after the business case has expired. The owner moves to a new project. The error channel goes quiet because no one reads it. The agent is now a background noise machine, costing money and generating small embarrassments.

Therefore, write the kill switch before writing the first prompt. Two sentences are enough. “This agent is paused if x exceeds y for three consecutive days.” “The named owner is authorized to pause without escalation.” That is the entire clause.
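The two-sentence clause translates almost directly into a rule a script can evaluate. The sketch below is one possible encoding, not a prescribed implementation: the `KillSwitch` class, its field names, the 5 percent threshold, and the owner address are all hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class KillSwitch:
    """Hypothetical encoding of a kill switch clause: metric, threshold, owner."""
    metric: str            # named metric, e.g. "error_rate" (placeholder name)
    threshold: float       # the "y" in "paused if x exceeds y"
    consecutive_days: int  # how many days in a row the breach must last
    owner: str             # named human authorized to pause without escalation

    def should_pause(self, daily_values: Sequence[float]) -> bool:
        """True if the most recent `consecutive_days` readings all exceed the threshold."""
        if len(daily_values) < self.consecutive_days:
            return False  # not enough history to trigger the clause
        recent = daily_values[-self.consecutive_days:]
        return all(value > self.threshold for value in recent)


# Illustrative values only: pause if error rate exceeds 5% for three straight days.
switch = KillSwitch(
    metric="error_rate",
    threshold=0.05,
    consecutive_days=3,
    owner="jane.doe@example.com",
)

print(switch.should_pause([0.02, 0.06, 0.07, 0.08]))  # True: last three days all above 5%
print(switch.should_pause([0.06, 0.07, 0.03, 0.08]))  # False: the streak was broken
```

The point of writing it this way is that the metric, the threshold, and the owner all have to be filled in before the check can run at all, which is the same discipline the clause asks for on paper.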

Before and After: What the Audit Changes

Before the audit, a typical B2B team commissions an agent because a competitor has one, a contractor recommends one, or a founder saw a demo on a Tuesday. The brief is vague. The owner is “the growth team.” The input is “our current process.” The decision is “help us be faster.” The kill switch does not exist.

After the audit, the same brief looks different. The owner is one person with name, email, and authority. The input is a 12-step flowchart with five named decision points. The agent owns exactly two of those decisions. The kill switch is “pause if more than four percent of outputs are flagged by the reviewer for two weeks in a row, reviewed every Friday.”

Consequently, the build conversation stops being about which platform to buy. It becomes a conversation about which decision is worth automating. That single shift is what moves a pilot from demo-stage to production.

What to Do This Week

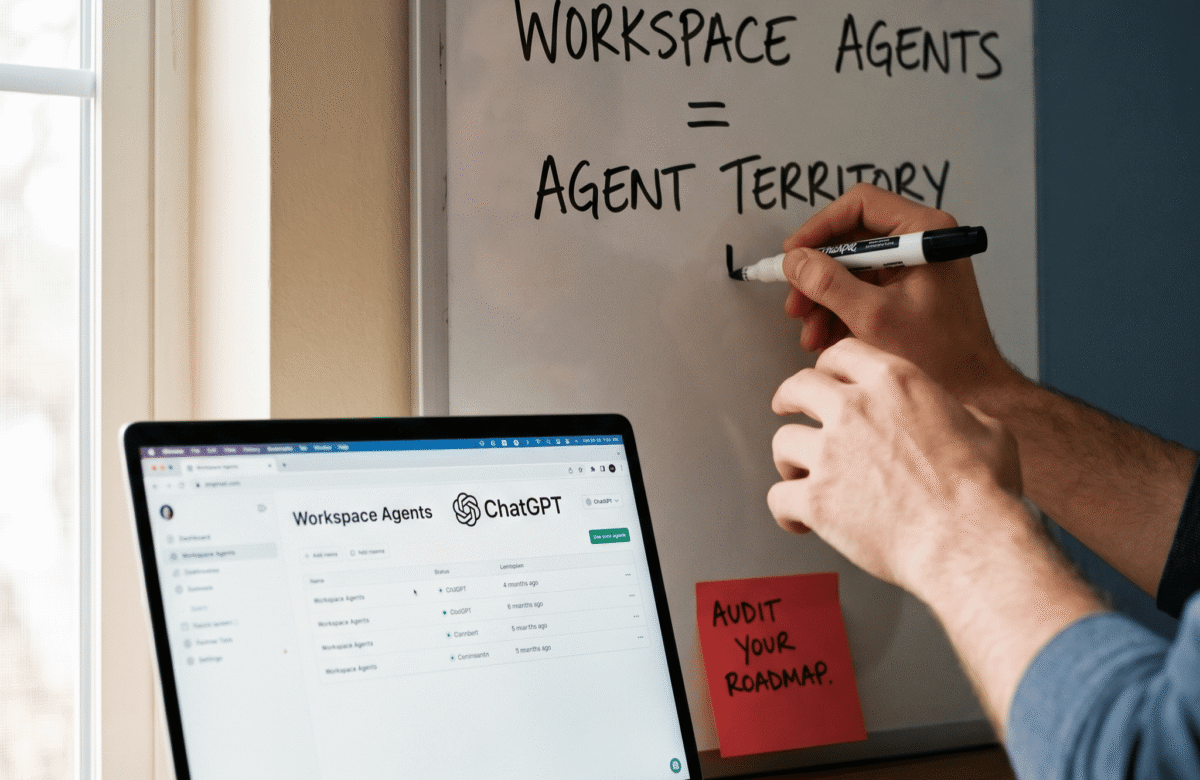

Pick one AI pilot currently running in the business. Open a clean document. Write four headings: Owner, Input, Decision, Kill Switch. Spend 10 minutes on each. The gaps will be obvious by the end of the hour.

If more than one of the four is blank, the pilot is not ready to scale. Fix the gap. Do not move to the next platform, the next model, or the next vendor conversation until the four answers are on paper.
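For teams that prefer to track the audit in a script rather than a document, the four headings and the "more than one blank" rule can be sketched as follows. The `PilotAudit` class, its field names, and the sample answers are assumptions made for this example, not part of any Lumeneze tooling.

```python
from dataclasses import dataclass, fields


@dataclass
class PilotAudit:
    """One field per heading; an empty string means the answer is still blank."""
    owner: str = ""
    workflow_input: str = ""
    decision: str = ""
    kill_switch: str = ""


def blank_checks(audit: PilotAudit) -> list[str]:
    """Return the names of the headings that have no answer yet."""
    return [f.name for f in fields(audit) if not getattr(audit, f.name).strip()]


def not_ready_to_scale(audit: PilotAudit) -> bool:
    """Apply the rule from the text: more than one blank means not ready."""
    return len(blank_checks(audit)) > 1


# Sample answers are illustrative only.
audit = PilotAudit(
    owner="Jane Doe",
    decision="Route each inbound ticket to tier 1 or tier 2",
)

print(blank_checks(audit))        # ['workflow_input', 'kill_switch']
print(not_ready_to_scale(audit))  # True
```

A single remaining blank does not trip the rule, but it still shows up in `blank_checks`, so the gap stays visible until it is filled.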

This framework works equally well for agents inside product teams, GTM stacks, or back-office automation. The principle is the same. Clarity about ownership, inputs, decisions, and shutdown conditions is what separates the small share of agents that reach production (fewer than 15 percent, per the IDC figure above) from the majority that die quietly.

To walk through the framework against a live pilot, book a 15-minute call at calendly.com/ashikurrahaman/quick-intro or reach out at ashikur@lumeneze.com.