Table of Contents

The AI product scorecard is a ten-question self-audit that tells a founder in under ten minutes whether their product is a real agent or a feature wearing product clothes. It exists because buyers in 2026 are drawing a sharp line between products that finish work and features that start it. The founders on the right side of that line compound through the year. The ones on the wrong side renegotiate price every quarter.

This guide walks through the full scorecard, shows a before and after case study, and explains how the scorecard changes pricing, positioning, and renewal conversations. Use it as a live audit, not a read-through.

Why the Scorecard Matters in 2026

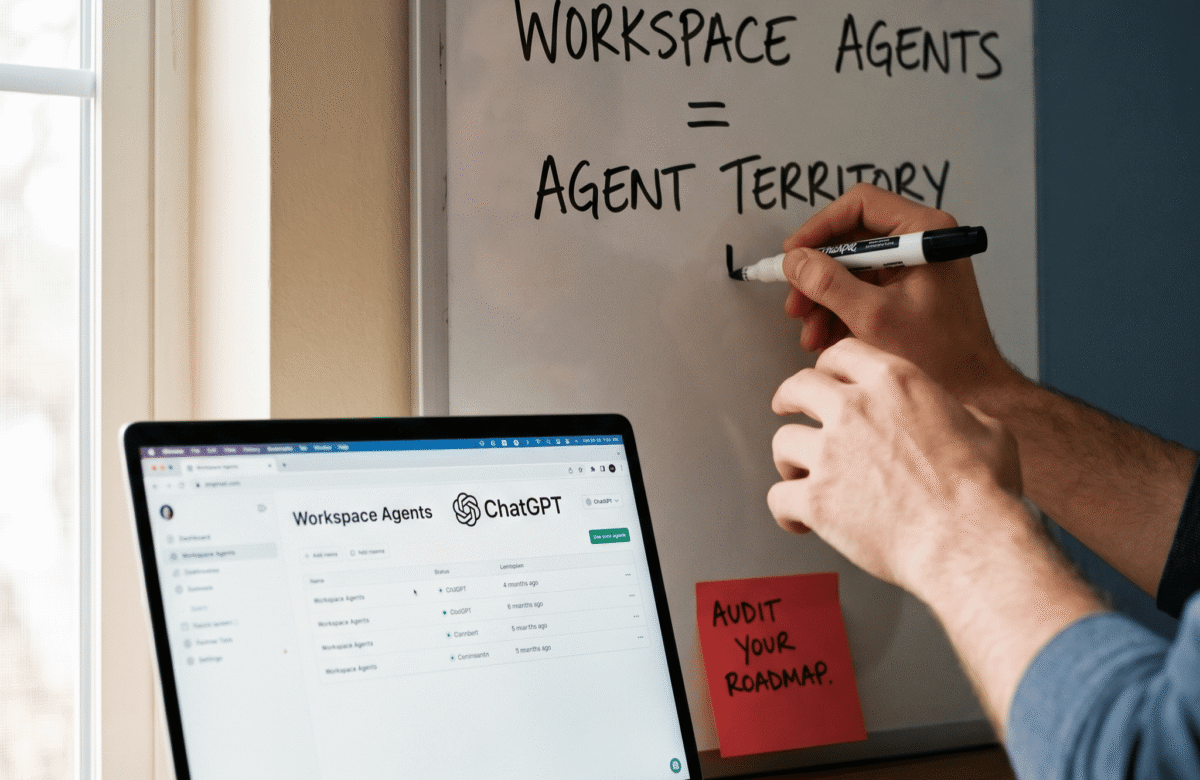

The category is splitting in public. On one side are feature products that add a generative panel or a smart button to existing software. On the other side are agent products that own an entire workflow and report a measurable outcome to the buyer.

The proof is live. Intercom Fin is approaching $100M in annual recurring revenue, priced at $0.99 per resolved conversation, now handling 2 million customer issues per week at a 67% resolution rate. Fin is not a feature inside Intercom. Fin is the product. The customer pays for the work done, not for the seat occupied.

In contrast, thousands of B2B products still ship “AI-powered” buttons without redesigning the workflow. Buyers have started asking a harder question. What outcome does the AI produce, and how is it measured? A feature cannot answer that. A product can.

Furthermore, Gartner projects 40% of enterprise applications will embed task-specific AI agents by the end of 2026, up from less than 5% in 2025. That is an 8x expansion in twelve months. A clear scorecard helps founders find out now, rather than after a missed renewal, which side of that expansion their product sits on.

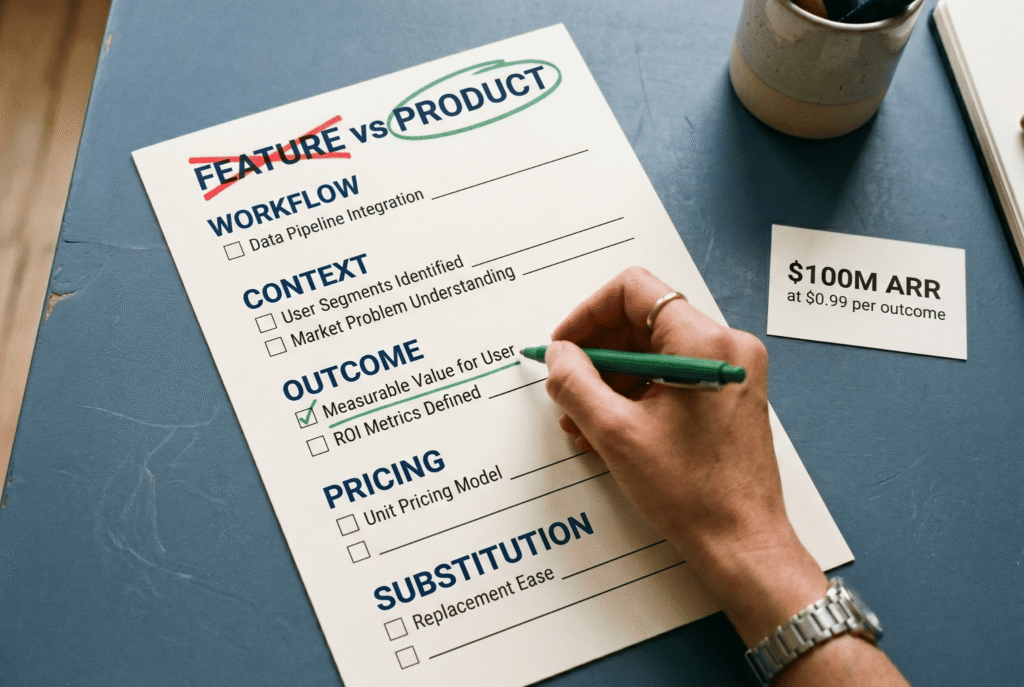

The 10-Question AI Product Scorecard

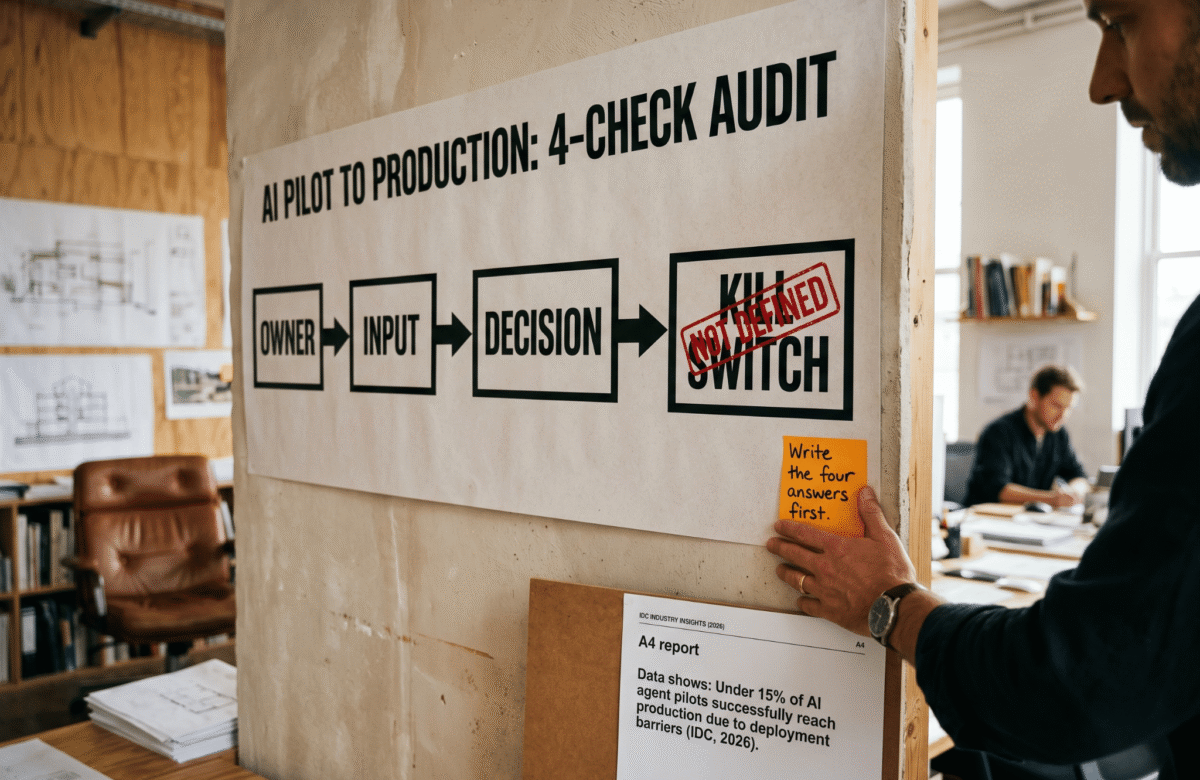

The AI product scorecard has five dimensions. Each dimension has two questions. Every yes answer scores one point. Zero to four points is a feature. Five to seven is a prototype. Eight to ten is a product.

Dimension 1. Workflow ownership.

Question 1. Does the agent finish the workflow end to end without the user assembling the output from fragments? Question 2. Can the user close the tab mid-task and return to a completed result rather than a draft waiting for review? A feature starts work and hands it back. An agent closes the loop.

Dimension 2. Context depth.

Question 3. Does the agent read context across connected systems, not just the prompt the user typed? Question 4. Does the agent retain memory across sessions so the user never has to re-brief it on basic account facts? A feature reacts to one input. An agent reads the full situation before acting.

Dimension 3. Outcome visibility.

Question 5. Does the product report business outcomes the buyer already tracks, such as tickets resolved, meetings booked, code shipped, or leads qualified? Question 6. Would the customer defend the renewal to their CFO using the product’s own dashboard? A feature produces output. An agent produces outcomes and attaches the proof.

Dimension 4. Pricing logic.

Question 7. Would a rational buyer pay per outcome delivered rather than per seat occupied? Question 8. Does the pricing model assume the agent does the work, not the human? A feature is priced by access. A product is priced by value delivered.

Dimension 5. Substitution test.

Question 9. Strip the AI label from the homepage. Does the value proposition still hold on its own? Question 10. Would the buyer still choose you over a well-designed seat-based tool that completes the same workflow? If the value collapses without the AI label, the AI is the marketing, not the product.

As a result, the scoring is honest and fast. Zero to four is a feature. Five to seven is a prototype that needs one more architecture decision. Eight to ten is a product that will still exist in twelve months.

Before and After: A Worked Example

For example, the scorecard is most useful when you can see a concrete before and after. Consider a mid-market B2B support platform that added an “AI-powered suggested reply” panel to every ticket in late 2025.

Before. The AI suggests replies, the human agent selects one, edits, and sends. The dashboard reports tokens consumed and suggestions accepted. Pricing stays at $75 per seat per month with an “AI add-on” of $20 per seat. Renewal conversations become price fights. Score on the scorecard, honestly: two out of ten.

After. The same team redesigns the product around a single workflow. The agent reads the ticket, the customer history, the order data, and the product docs. It drafts, sends, and closes tickets it can handle independently, escalating only what it cannot resolve. The dashboard reports tickets resolved, not tokens consumed. Pricing moves to a per-resolved-ticket model. Score on the scorecard: nine out of ten.

However, the market reaction is the part most founders miss. In the before state, expansion conversations required discounting and begging. In the after state, expansion happens automatically because customers want more resolutions, not more seats. This is the same pattern that took Intercom Fin from $1M to $100M in annual recurring revenue on a $0.99-per-outcome model.

For example, Cursor followed the same pattern on the engineering side. The product does not charge for access to an autocomplete feature. It charges for the work the agent ships into production code. Cursor crossed $300M ARR in late 2025 using that positioning. The AI product scorecard would score Cursor close to the ceiling.

How the Scorecard Resets Pricing

However, a low score on Dimension 4 is the most expensive gap on the scorecard. If the pricing model still assumes the human is doing the work, the product caps its own upside.

Therefore, the pricing reset is rarely about raising the number. It is about changing the unit. The unit stops being a seat and starts being a resolution, a meeting, a qualified lead, a report, a shipped pull request, an invoice collected, or a case closed. Whatever outcome the buyer already tracks, the product prices against directly.

For example, Intercom Fin charges $0.99 per resolved conversation. The pricing is transparent, outcome-anchored, and removes the negotiation friction that seat pricing creates. In contrast, a feature product charging $20 per seat for an “AI add-on” will always be one buyer review away from cancellation.

Consequently, a low score on the scorecard forces a bigger decision than adding a new feature. It forces a pricing-model change, a dashboard redesign, and sometimes a positioning rewrite. This is the real reason most founders avoid scoring their product honestly. The fix is not a sprint. The fix is a quarter.

Next Steps After the Scorecard

Once the scorecard reveals the honest score, the next move depends on where the gaps sit. A weak workflow ownership score means the core architecture needs rework. A weak outcome visibility score is usually the fastest fix, because a new dashboard can reframe the product without rewriting it.

In addition, the substitution test at the end of the scorecard doubles as a messaging audit. If the homepage stops making sense once the AI label is removed, the product positioning needs rewriting before the next launch. Buyers have learned to ignore AI adjectives. They read for workflow, outcome, and proof.

Therefore, at Lumeneze, we use this audit with founders to decide which workflow is worth fully owning, how the outcome gets measured on the buyer’s dashboard, and which pricing-model change should follow. A 20-minute strategy call walks through every dimension against your current product and surfaces the single highest-value decision to make this quarter.

If you want the full AI product scorecard as a printable checklist with the scoring rubric and two full case studies, book a 15-minute intro call: calendly.com/ashikurrahaman/quick-intro. The checklist travels best when it is printed, shared with a co-founder, and scored together.

The category is rewriting itself this quarter. The AI feature era is visibly ending. The AI agent era is compounding in public. Which workflow does your product fully own today, and which outcome does it report by default?