Table of Contents

AI agent architecture is the single biggest predictor of whether your automation project ships or gets canceled. Gartner predicts that over 40 percent of agentic AI projects will be canceled by the end of 2027. The cause is not the model. The cause is the system underneath.

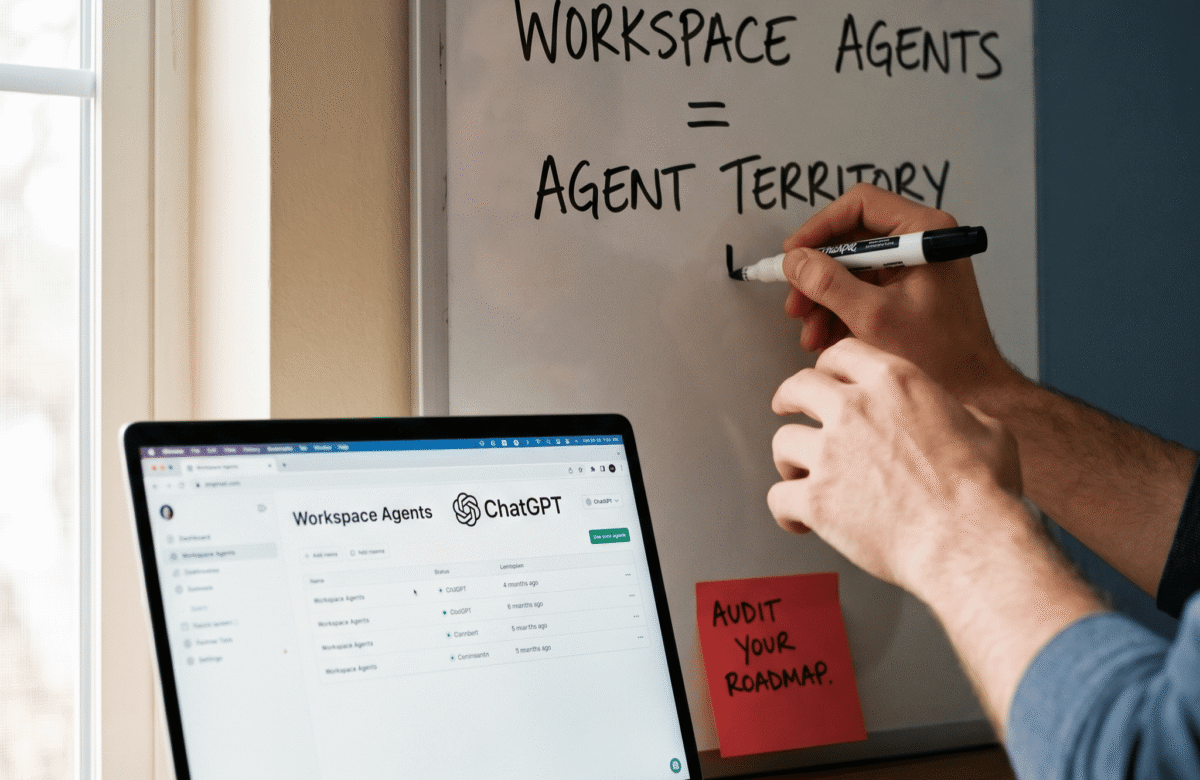

In April 2026, Salesforce launched Headless 360 to expose its entire platform as agent infrastructure. ServiceNow announced that every product it sells is now AI-enabled. Snowflake and OpenAI signed a 200 million dollar agentic AI partnership. The control plane war is loud and expensive. However, most founders are still asking the wrong question. They ask which agent platform to buy. They should ask which workflows are worth automating.

This guide covers the three architectural checks that separate the 60 percent of agent projects that ship from the 40 percent that get canceled.

Why 40 Percent of Agent Projects Fail

Gartner’s analysis of agentic AI failure identified four patterns that keep repeating across the market.

First, hype-driven deployment. Most agent projects are proof-of-concept experiments built because leadership wants an AI headline, not because the business case was modeled.

Second, agent washing. Gartner estimates that of the thousands of vendors claiming agentic AI capability, only about 130 have real agent functionality. The rest are chatbots, robotic process automation scripts, or AI assistants rebranded with new marketing.

Third, integration complexity. Agents that need to read and write across legacy systems often require custom connectors, identity work, and data cleanup that doubles the original project scope.

Fourth, automating broken workflows. This is the most common failure mode. As a result, companies deploy agents on top of processes that humans cannot execute cleanly. The agent then magnifies every missing rule, undefined edge case, and data gap.

For example, a company deploying an agent to handle inbound sales leads often discovers that its CRM data hygiene is the real blocker. The agent does not fix the hygiene problem. It inherits it, then sends 500 wrong emails before anyone notices.

The AI Agent Architecture Framework

Good AI agent architecture is not a tool stack. It is a sequence of decisions that resolve before any platform gets evaluated. At Lumeneze, we see this pattern with every client: the teams that ship agents that scale all run the same three checks before they pick a vendor. More on our approach at our growth systems practice.

The framework is three checks in order. Each check must pass before the next one begins. Skipping the sequence is the most common architectural mistake.

In addition, every check maps to a specific artifact the team produces and owns. Process documentation, role definitions, and observability specifications. Without these artifacts, the agent has no scaffolding and no way to be debugged when it drifts.

Therefore, the output of good AI agent architecture is not a platform choice. The output is a shipping plan with roles, inputs, outputs, metrics, and escalation paths defined.

Check 1: Process Clarity Before Platform Choice

The first check in any AI agent architecture is whether a human could execute the target process today, cleanly, with written rules.

This check sounds obvious and gets skipped constantly. Founders believe the agent will figure out the fuzzy parts. Current models cannot. Agents amplify structure and expose the absence of it.

For example, consider a startup deploying an agent to triage customer support tickets. If the support team has no documented routing rules, the agent invents its own rules on every ticket. The behavior drifts week over week, support quality swings, and the team blames the agent instead of the missing rulebook.

The artifact required is a process document that lists every step, decision point, input source, and output destination. If the document has more than three “it depends” branches without clear rules, the process is not ready for automation.

Check 2: Judgment vs Execution Separation

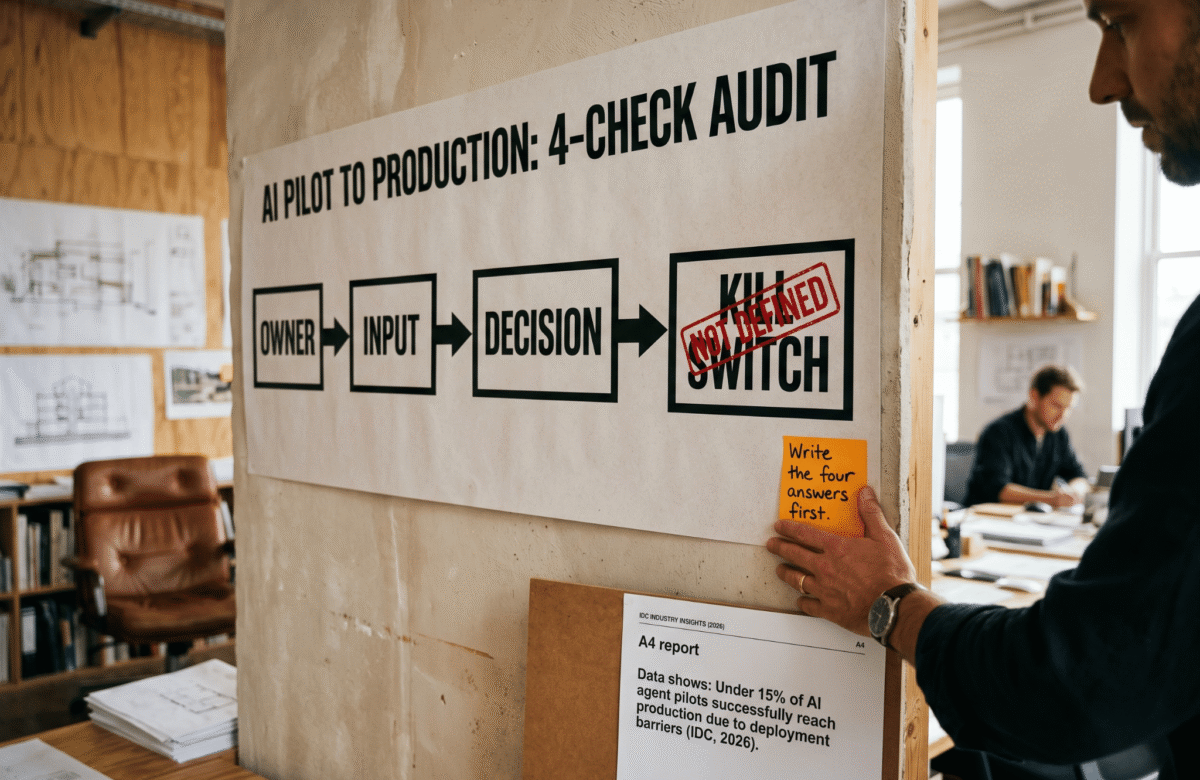

The second check is the boundary between what the agent executes and what a human decides. Good AI agent architecture draws this line before deployment, not after an incident.

Agents are strong at repeatable steps with structured inputs. They are weak at decisions that involve tradeoffs, ambiguity, or reputational risk. Conflating the two creates the pattern Gartner calls “automating judgment.”

For example, an agent can draft a response to a refund request. An agent should not approve a refund above a threshold, reply to a PR-sensitive complaint, or change a pricing rule. Those are judgment calls with asymmetric downside.

Furthermore, the handoff between execution and judgment should be explicit. The agent flags the case, the human sees the flag, the human decides, and the system logs the decision. No implicit handoffs. No agents making judgment calls because nobody defined where execution ends.

The artifact required is a RACI-style document listing which tasks the agent owns end-to-end, which tasks require human approval, and which tasks are human-only.

Check 3: Observability From Day One

The third check is observability. Teams that ship agents that scale instrument them before deployment, not after. This is non-negotiable in any durable AI agent architecture.

Observability has three components. Input logs capture what the agent saw. Decision logs capture what the agent did and why. Outcome metrics capture whether the output produced the business result.

Consequently, when an agent misbehaves, the team can answer “where did this go wrong” in minutes instead of days. Without this, debugging becomes guesswork and teams lose confidence fast.

In addition, every agent needs a kill switch. A single command or toggle that shuts the agent off if outputs drift. Too many teams learn this the hard way after the agent sends thousands of wrong emails overnight.

The artifact required is a dashboard with at least five metrics: input volume, successful completions, human-flagged cases, outcome quality score, and error rate. Plus a kill switch with a documented owner.

What to Do This Week

If your team is evaluating agent platforms right now, pause the evaluation for five days and run the three checks instead.

Day 1 and 2: document the target process. Every step, input, output, and decision point. If the doc has gaps, the agent will have gaps.

Day 3: draft the execution-versus-judgment boundary. What does the agent own? What does the human own? Where is the handoff?

Day 4: specify observability. What logs, what metrics, what kill switch, what owner.

Day 5: only now evaluate platforms. With the artifacts from days one through four, platform selection becomes mechanical. The fit is obvious.

Therefore, strong AI agent architecture comes first, platform second. This is the difference between the 60 percent of agent projects that ship and the 40 percent Gartner expects to be canceled.

If your team is stuck on Check 1 or Check 2, a 15-minute call can usually unblock the next step. Book a time at calendly.com/ashikurrahaman/quick-intro and bring the workflow you are trying to automate.

Which check is hardest for your team right now, process clarity, judgment boundaries, or observability?