AI search visibility is the new distribution category most B2B marketing teams still have no plan for. Adobe closed its annual Summit in Las Vegas on April 22, 2026. The keynote named Brand Visibility a first-class pillar of its new CX Enterprise platform. The signal is clear. The way buyers discover your company is fracturing across ChatGPT, Perplexity, Google AI Overviews, Claude, and Gemini. Traditional search is no longer the default path to a demo.

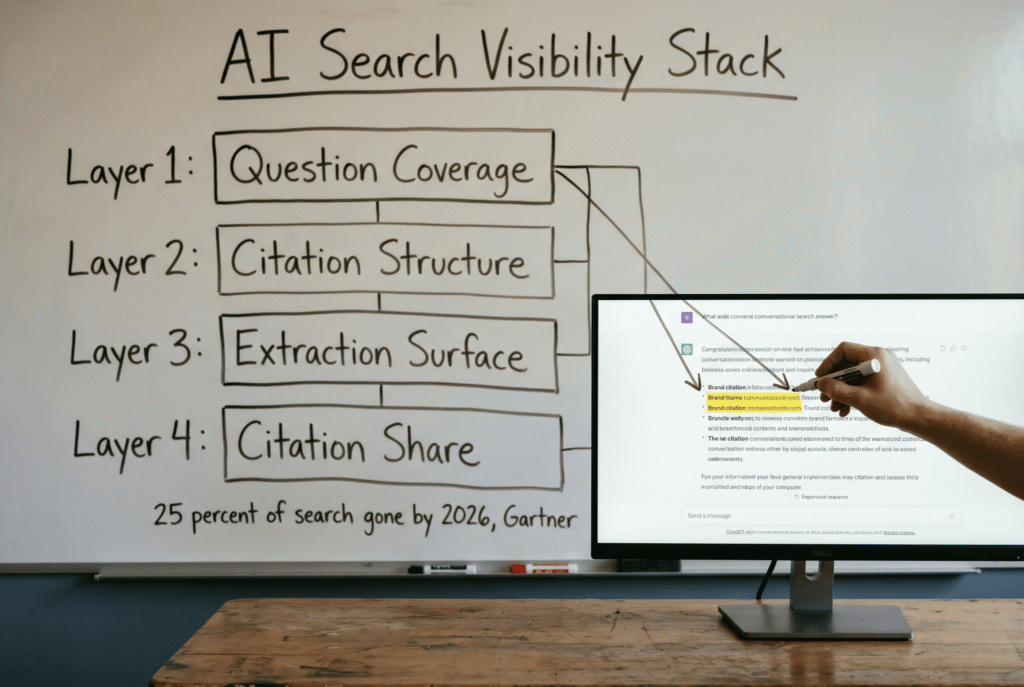

Gartner forecasts a 25 percent drop in traditional search volume this year. By 2028, Gartner expects the decline to reach 50 percent as users shift to AI-driven search interfaces. Yet fewer than 12 percent of marketing teams have a documented plan to show up in those answer engines. The gap is enormous. For the teams that close it first, the head start compounds into a distribution moat that paid channels cannot match.

Why AI search visibility matters now

Two numbers define the shift. ChatGPT now serves roughly 883 million monthly users, and Google AI Overviews appear in approximately 55 percent of searches. Traffic that used to land on your site is increasingly handled by the AI engine itself, with your brand either cited or invisible.

As a result, the unit of distribution is no longer the blue link. It is the citation. Buyers read the AI answer, see your brand named once or twice, and move straight to evaluation. If your content is not in that answer, your pipeline quietly leaks.

For example, a B2B buyer researching “best customer journey orchestration platform” may now ask Perplexity rather than Google. The vendors named in the first answer frame the shortlist. Vendors not named do not make the next round, regardless of search rank.

What Adobe Summit 2026 signaled

Adobe retired its Experience Cloud brand and launched CX Enterprise at Summit 2026. The new architecture is AI-first and organized around three pillars. Those pillars are Brand Visibility, Customer Engagement, and Content Supply Chain. Underneath sits the Adobe AI Platform with two intelligence systems that govern brand consistency and optimization across AI-powered channels.

Adobe also moved more than ten purpose-built AI agents from preview into production. Over 1,770 enterprise customers are already entitled to use them under a credit-based pricing model. The move matters beyond Adobe. It tells every operator that AI visibility is now a revenue system, not a content tactic.

The lesson for operators is not that you need Adobe. The lesson is that the category is real. AI search visibility will be measured, budgeted, and competed on in 2026 and 2027. Teams that wait will find themselves priced out of their own category.

The four-layer AI search visibility framework

Most teams treat AI search visibility as a content refresh. That approach fails because the problem is architectural, not cosmetic. The framework below is the one we use when advising early-stage B2B startups rebuilding for AI-first discovery.

Layer 1: Question coverage. Pull the top 20 to 50 questions your ICP asks AI engines about your category. Use ChatGPT sessions, Perplexity and Gemini logs, sales call transcripts, and support tickets. Turn each question into a dedicated page, not a blog post buried under a tag page.

Layer 2: Citation-worthy structure. Every page must answer the question in the first 150 words. Include named sources, real statistics, and direct quotations. Princeton research on Generative Engine Optimization (Aggarwal et al.) reports the lift: citation rate improves by 30 to 40 percent compared to unoptimized content.

Layer 3: Extraction surface. Use clean HTML, schema markup, clear entity references, and strong internal linking so LLMs can parse your content without ambiguity. Fragmented navigation and JavaScript-heavy rendering are the two biggest killers of AI extraction today.

Layer 4: Citation share measurement. Ranking share is a legacy metric. The new metric is how often your brand is cited inside AI answers for your target queries. Several tools track this now, but even a simple internal prompt-and-log process works for small teams.
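To make the schema markup in Layer 3 concrete, here is a minimal Python sketch that emits schema.org FAQPage JSON-LD for a single question page. The function name `faq_jsonld` and the sample question and answer are our own illustrative assumptions. FAQPage is only one of several schema.org types that could fit a question page, so treat this as a starting point rather than a prescribed format.

```python
import json


def faq_jsonld(question: str, answer: str) -> str:
    """Build schema.org FAQPage JSON-LD for one question page (hypothetical helper)."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical page content, for illustration only.
markup = faq_jsonld(
    "What is customer journey orchestration?",
    "Customer journey orchestration coordinates customer interactions across channels.",
)

# Wrap the JSON-LD in the script tag that goes into the page's HTML.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The printed script tag would sit in the page's HTML next to the visible answer, so the machine-readable question and the on-page answer stay in sync.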

How to measure AI search visibility

Track three metrics every week. First, citation share, meaning the percentage of AI answers to your target queries that mention your brand at all. Second, citation position, meaning whether your brand is the first, second, or third citation. Third, citation context, meaning whether the mention frames you as the leader, a peer, or a footnote.
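For small teams running a prompt-and-log process, the first two metrics can be computed from a plain log of answers. The sketch below assumes a hypothetical `LoggedAnswer` record in which someone has noted, for each target query, which brands the AI answer named and in what order. The brand names and queries are made up. Citation context is a qualitative judgment and still needs a human label, so it is left out here.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class LoggedAnswer:
    """One logged AI answer for a target query (hypothetical log format)."""
    query: str
    engine: str
    cited_brands: List[str]  # brands in the order the answer names them


def citation_share(log: List[LoggedAnswer], brand: str) -> float:
    """Fraction of logged answers that mention the brand at all."""
    if not log:
        return 0.0
    hits = sum(1 for answer in log if brand in answer.cited_brands)
    return hits / len(log)


def average_position(log: List[LoggedAnswer], brand: str) -> Optional[float]:
    """Mean 1-based citation position across answers that cite the brand."""
    positions = [
        answer.cited_brands.index(brand) + 1
        for answer in log
        if brand in answer.cited_brands
    ]
    if not positions:
        return None
    return sum(positions) / len(positions)


# Made-up weekly log, for illustration only.
weekly_log = [
    LoggedAnswer("best journey orchestration platform", "perplexity", ["Acme", "Globex"]),
    LoggedAnswer("journey orchestration pricing", "chatgpt", ["Globex", "Acme"]),
    LoggedAnswer("journey orchestration for startups", "gemini", ["Globex", "Initech", "Acme"]),
    LoggedAnswer("journey orchestration vs CDP", "claude", ["Globex"]),
]

print(f"citation share: {citation_share(weekly_log, 'Acme'):.0%}")  # citation share: 75%
print(f"average position: {average_position(weekly_log, 'Acme')}")  # average position: 2.0
```

Running the same prompts on the same day each week keeps the trend line comparable from one week to the next.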

In addition, track AI-referred site traffic separately from organic. Visits carrying referrer strings from Perplexity, ChatGPT, and Claude are growing each month. Treat them as a distinct channel inside your analytics, not a subset of organic search.
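One way to split that channel out is to bucket each visit by its referrer hostname. The sketch below is a minimal Python version. The hostname list is our assumption of the domains these products commonly send traffic from, so check it against your own analytics logs. The `ai:` label prefix and the function name are hypothetical.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames for each AI engine; extend from your own logs.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}


def classify_referrer(referrer: str) -> str:
    """Label a full referrer URL as an AI engine, direct, or other traffic."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]  # treat www.perplexity.ai the same as perplexity.ai
    if not host:
        return "direct"  # empty or unparseable referrer
    if host in AI_REFERRER_HOSTS:
        return f"ai:{AI_REFERRER_HOSTS[host]}"
    return "other"


print(classify_referrer("https://www.perplexity.ai/search?q=orchestration"))  # ai:Perplexity
print(classify_referrer("https://www.google.com/search?q=orchestration"))     # other
```

The function expects full URLs with a scheme. A bare domain such as `perplexity.ai` parses without a hostname and falls into the `direct` bucket.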

Your dashboard therefore needs a citation share line alongside your traditional ranking share line. Within two quarters, the citation line will forecast pipeline better than the ranking line.

Building AI search visibility in 90 days

A pragmatic 90-day build looks like this. Days 1 to 15: question coverage audit plus a citation share baseline. Days 16 to 45: rebuild 20 priority pages with citations, stats, and schema. Days 46 to 75: expand to 40 pages and add prompt-based monitoring. Days 76 to 90: measure citation share lift and double down on the top 10 converting queries.

Teams that finish a 90-day sprint before Q4 2026 will enter 2027 with a defensible AI search visibility position. Teams that wait will compete against incumbents whose content is already cited by every major AI engine.

For a second pair of eyes on your AI search visibility plan, we build these systems for early-stage B2B founders at Lumeneze. Book a 15-minute call to map your first 90 days: calendly.com/ashikurrahaman/quick-intro.

References: Adobe Summit 2026 announcements. Gartner forecasts on AI search and organic traffic. Princeton research on Generative Engine Optimization, Aggarwal et al.