
Building a solid AI agent product strategy has moved from optional to essential for any B2B SaaS founder operating in 2026. The systems that drove product-led growth for the last decade were built around a clear assumption: the user is human. That assumption is breaking. And most product teams have not caught up.

According to recent enterprise research, 76% of organizations are now deploying or actively implementing agentic AI. A growing share of those agents are not internal tools. They are your users. They are activating your product, querying your API, and generating usage signals at volumes no human user could sustain. The founders who redesign their activation and retention logic around this reality early will have a structural advantage. The ones who do not will keep optimizing for a user base that shrinks as a share of actual product traffic every quarter.

Why PLG Was Built for Human Users

Product-led growth emerged as a response to high customer acquisition costs and the reality that users could evaluate and adopt software without a sales team in the loop. The core playbook was built around observable human behavior: time to first value, the wow moment, feature discovery curves, session frequency, UI engagement depth, and seat expansion as a proxy for adoption.

These are not arbitrary metrics. They were derived from studying how human users actually moved through software. A person clicks around, gets confused, finds a useful feature, builds a habit, and eventually pays or expands their usage. The product team’s job was to shorten every step of that journey.

The problem is that AI agents do not move through software the way humans do. They do not discover features by exploring a UI. They do not experience a wow moment. They do not need onboarding tooltips. They read your documentation, query your API, and either succeed or fail based on the structural quality of your integration surface. If the PLG system running your product was designed for human-paced discovery, agents bypass your entire engagement layer.

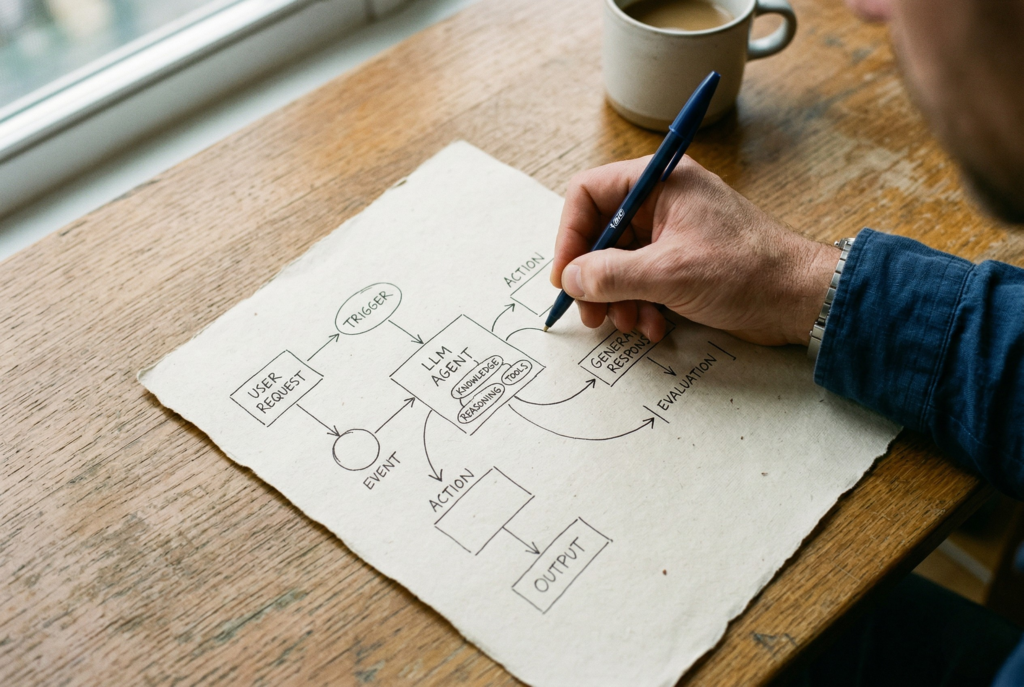

How AI Agents Actually Use Your Product

Understanding the real behavior pattern of an AI agent inside your product is the starting point for any serious AI agent product strategy. Here is what it looks like in practice.

Agents activate through API calls, not UI clicks. When an agent integrates with your product, its first action is typically a structured request against your API or a documented workflow endpoint. There is no onboarding flow, no welcome modal, no tutorial. There is a call, and either it works or it does not.

Agents measure success through automated outcomes, not engagement streaks. A human user might spend 20 minutes in your product per day, signaling healthy engagement. An agent might generate 10,000 API calls in an overnight batch job and then go quiet for 72 hours. Measuring retention through session time or login frequency tells you nothing useful about that agent’s dependence on your product.

Agents stop using your product because of structural failures, not poor UX. Churn for human users often traces back to confusion, competitive alternatives, or unmet expectations. Agent churn traces back to API instability, documentation gaps, rate limit policies, or authentication complexity. These are completely different retention problems requiring completely different interventions.

AI Agent Product Strategy: Redefining Activation

The most important shift in an AI agent product strategy is the redefinition of activation. For most product teams, activation is currently defined as something like: user completes the core action in the UI within the first session. For agents, that definition is structurally broken.

Here is how activation needs to be redefined:

- Human activation: User completes first core action in UI, reaches wow moment, begins building a usage habit.

- Agent activation: Agent completes first successful automated workflow, meaning an API call that returns expected output and generates a downstream result in the agent’s system.

This reframing has direct implications for your onboarding investment. Documentation quality and API response clarity become your primary onboarding channels. The time and engineering effort that currently go into UI onboarding flows, tooltips, and in-product walkthroughs have diminishing returns for an agent-first user base. The investment that matters is in structured API documentation, clear error messaging, and sandbox environments that let agents test integrations before going to production.
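To make "clear error messaging" concrete, here is a minimal sketch of a rate-limit response an agent could act on without a human reading it. The function name, the `rate_limit_exceeded` code, and the `recovery` field are illustrative assumptions, not a standard. Only the 429 status and the `Retry-After` header come from HTTP itself.

```python
from typing import Any, Dict


def rate_limit_error(limit: int, window_seconds: int, retry_after_seconds: int) -> Dict[str, Any]:
    """Build a 429 response with a machine-readable code and a recovery hint."""
    return {
        "status": 429,  # HTTP "Too Many Requests"
        "headers": {"Retry-After": str(retry_after_seconds)},
        "body": {
            "error": {
                # Hypothetical stable code an agent can branch on.
                "code": "rate_limit_exceeded",
                "message": f"Limit of {limit} requests per {window_seconds}s exceeded.",
                # Hypothetical field: tells the agent what to do next.
                "recovery": f"Wait {retry_after_seconds} seconds, then retry the same request.",
            }
        },
    }


response = rate_limit_error(limit=100, window_seconds=60, retry_after_seconds=30)
```

The point of the design is that the agent never has to parse prose: it can branch on `code` and read the wait time from the header.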

This is not an argument to eliminate human-centered UX. If your product has both human and agent users, both activation paths need to be maintained. The mistake is designing only for humans and assuming agents will follow the same path.
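The agent activation definition above can be sketched as a metric. This is one possible shape, not a prescribed implementation: the `WorkflowEvent` schema is hypothetical, and it assumes you have some way to learn whether the downstream result happened, for example a callback or status report from the agent's system.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Iterable, Optional


@dataclass(frozen=True)
class WorkflowEvent:
    """One workflow attempt by an agent (hypothetical schema)."""
    agent_id: str
    workflow: str
    timestamp: datetime
    api_ok: bool         # the API call returned the expected output
    downstream_ok: bool  # assumed signal: the agent's system confirmed a result


def activation_time(events: Iterable[WorkflowEvent], agent_id: str) -> Optional[datetime]:
    """Return when the agent first completed a successful automated workflow,
    or None if it has not activated yet."""
    successes = [
        e.timestamp
        for e in events
        if e.agent_id == agent_id and e.api_ok and e.downstream_ok
    ]
    return min(successes) if successes else None


events = [
    # The API call worked, but nothing happened downstream: not activated yet.
    WorkflowEvent("agent-1", "create_invoice", datetime(2026, 1, 5, 2, 0), True, False),
    # Both conditions hold: this is the activation moment.
    WorkflowEvent("agent-1", "create_invoice", datetime(2026, 1, 5, 2, 5), True, True),
]
print(activation_time(events, "agent-1"))  # 2026-01-05 02:05:00
```

Requiring both flags is deliberate. A successful API response alone would count integrations that never produce value for the agent.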

Rebuilding Retention and Expansion Signals

Retention and expansion metrics need the same reconstruction. The signals that indicate a healthy, retained agent user are categorically different from the signals a healthy human user produces in your analytics.

Retention signals for agent users

- Query volume consistency over time, not session frequency. An agent making consistent calls across weeks is retained. One that spikes and disappears is at risk.

- Error rate trends. Rising error rates signal that something in your API or their integration is breaking. This is a leading churn indicator for agents.

- Automated outcome success rate. Are agents completing the downstream tasks they integrated with you to accomplish? Track this at the workflow level, not just the API call level.
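The three retention signals above could be computed roughly as follows. This is a sketch under stated assumptions: it expects weekly aggregates per agent, uses the coefficient of variation as one possible measure of "consistency", and compares the later half of a period against the earlier half as a simple stand-in for a trend. The function names are illustrative.

```python
from statistics import mean, pstdev
from typing import Sequence


def volume_consistency(weekly_calls: Sequence[int]) -> float:
    """Coefficient of variation of weekly call volume.
    Lower means steadier usage; a spike-then-silence pattern scores high."""
    if not weekly_calls:
        return float("inf")
    avg = mean(weekly_calls)
    return pstdev(weekly_calls) / avg if avg else float("inf")


def error_rate_trend(weekly_error_rates: Sequence[float]) -> float:
    """Mean error rate of the later half minus the earlier half.
    A positive value means errors are rising."""
    if len(weekly_error_rates) < 2:
        return 0.0
    half = len(weekly_error_rates) // 2
    return mean(weekly_error_rates[half:]) - mean(weekly_error_rates[:half])


def workflow_success_rate(completed: int, attempted: int) -> float:
    """Share of attempted workflows that reached their downstream outcome."""
    return completed / attempted if attempted else 0.0
```

For example, `volume_consistency([100, 100, 100, 100])` is `0.0`, while an agent that made 1,000 calls in week one and none afterwards scores well above 1. Any alerting threshold is something you would need to calibrate against your own traffic.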

Expansion signals for agent users

- Workflow breadth, not seat count. An agent expanding its use of your product shows up as more distinct workflow types being executed, not additional seats licensed.

- Usage volume thresholds. With usage-based pricing becoming standard, expansion is now a volume question. Are the automated tasks growing? Is throughput increasing?

- New integration endpoints touched. An agent beginning to use a second or third API endpoint it did not initially integrate with is an expansion signal equivalent to a human discovering a new feature.
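As a rough illustration of the expansion signals above, the sketch below compares one agent's calls across two periods. It assumes each logged call can be tagged with a workflow type and an endpoint. Both labels, and the function name, are hypothetical.

```python
from typing import Dict, Iterable, Tuple

# Each call is a (workflow_type, endpoint) pair; both are hypothetical labels.
Call = Tuple[str, str]


def expansion_signals(baseline: Iterable[Call], current: Iterable[Call]) -> Dict[str, object]:
    """Compare two periods of one agent's calls and report expansion signals."""
    baseline_calls, current_calls = list(baseline), list(current)
    base_workflows = {w for w, _ in baseline_calls}
    base_endpoints = {e for _, e in baseline_calls}
    return {
        # Workflow breadth: workflow types not seen in the baseline period.
        "new_workflows": {w for w, _ in current_calls} - base_workflows,
        # New integration endpoints touched.
        "new_endpoints": {e for _, e in current_calls} - base_endpoints,
        # Usage volume: ratio of current to baseline call count.
        "volume_growth": len(current_calls) / len(baseline_calls) if baseline_calls else None,
    }
```

A non-empty `new_endpoints` set is the agent-side counterpart of a human discovering a new feature.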

These signals require different instrumentation than standard product analytics. Most analytics tools are built around human events: sessions, clicks, page views, feature flags. You need event tracking at the API layer that captures workflow-level outcomes, not just request counts.
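One way to move from request counts to workflow-level outcomes is to roll API requests up by workflow run. The sketch below assumes agents send a run identifier with each request, for instance in a header. That `run_id` convention is an assumption for illustration, not something your API necessarily has today.

```python
from typing import Dict, Iterable, NamedTuple


class ApiRequest(NamedTuple):
    run_id: str    # hypothetical identifier tying calls into one workflow run
    endpoint: str
    status: int    # HTTP status code of the response


def workflow_outcomes(requests: Iterable[ApiRequest]) -> Dict[str, bool]:
    """Map each workflow run to True only if every request in it succeeded."""
    outcomes: Dict[str, bool] = {}
    for req in requests:
        ok = req.status < 400
        outcomes[req.run_id] = outcomes.get(req.run_id, True) and ok
    return outcomes


log = [
    ApiRequest("run-a", "/v1/contacts", 200),
    ApiRequest("run-a", "/v1/invoices", 201),
    ApiRequest("run-b", "/v1/contacts", 200),
    ApiRequest("run-b", "/v1/invoices", 500),
]
print(workflow_outcomes(log))  # {'run-a': True, 'run-b': False}
```

Treating any failed request as a failed run is a simplification. If your agents retry, you would likely want to judge a run by its final attempt instead.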

What to Do Right Now

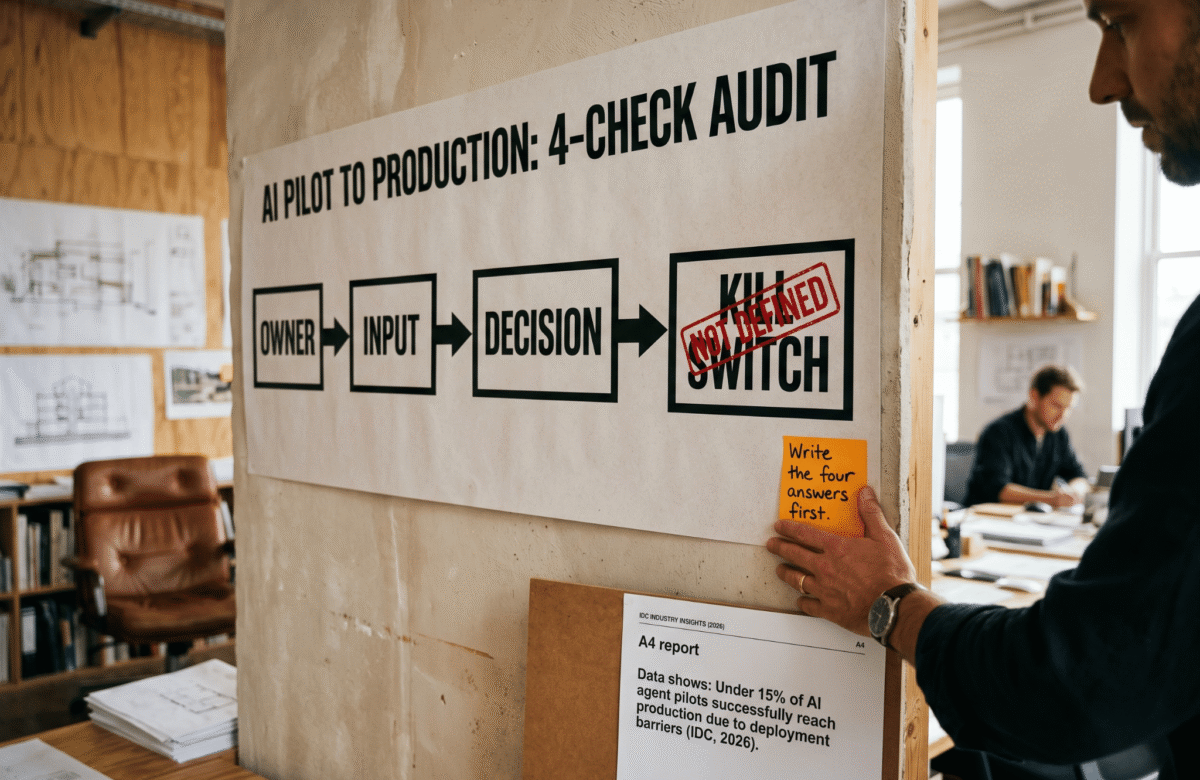

Building an effective AI agent product strategy does not require rebuilding your entire product. It requires a targeted audit and two structural updates.

- Audit your current user base for agent traffic. Check your API logs for non-human usage patterns. Batch jobs, high-frequency off-hours calls, and structured request patterns without associated UI sessions are indicators. You may already have significant agent usage that your current metrics are not surfacing correctly.

- Rewrite your activation definition. Document what agent activation looks like in your specific product. Define the first successful automated outcome. Make this an official product metric alongside your existing human activation metric.

- Invest in API and documentation quality as a first-class onboarding channel. This means structured API references, working code examples, clear error codes with recovery instructions, and sandbox environments. Treat this with the same urgency you would treat a broken onboarding flow for human users.
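For the audit step, a first pass over your API logs might look like the heuristic below. It is a sketch, not a classifier: the `ClientStats` fields are hypothetical aggregates you would derive from your own logs, and the thresholds (60 calls per minute, half of traffic off-hours, two of three signals) are placeholder assumptions to tune.

```python
from dataclasses import dataclass


@dataclass
class ClientStats:
    """Per-client aggregates from API logs (hypothetical fields)."""
    client_id: str
    api_calls: int
    ui_sessions: int            # UI sessions associated with the same account
    off_hours_calls: int        # calls outside the account's business hours
    peak_calls_per_minute: int


def looks_like_agent(
    stats: ClientStats,
    max_human_rate: int = 60,       # assumed ceiling for human-driven calls per minute
    off_hours_share: float = 0.5,   # assumed share of off-hours traffic that is suspicious
) -> bool:
    """Flag a client as a likely agent when at least two of three signals fire."""
    if stats.api_calls == 0:
        return False
    signals = [
        stats.ui_sessions == 0,
        stats.off_hours_calls / stats.api_calls >= off_hours_share,
        stats.peak_calls_per_minute > max_human_rate,
    ]
    return sum(signals) >= 2


batch_job = ClientStats("acct-17", api_calls=10_000, ui_sessions=0,
                        off_hours_calls=9_500, peak_calls_per_minute=400)
print(looks_like_agent(batch_job))  # True
```

Requiring two signals rather than one keeps a human who occasionally works late from being counted as an agent.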

The broader shift toward usage-based pricing, documented by SaaS researchers and ProductLed’s 2026 PLG predictions, is directly downstream of this change. Pricing models follow usage patterns. As agent usage grows, seat-based pricing becomes a worse proxy for value delivered, and the market moves to align pricing with actual automated outcomes.

For more on how Lumeneze approaches product strategy for early-stage B2B founders, see our growth systems services and our work on product-led growth architecture.

Conclusion

The shift to agent users is not coming. It is already inside your product. Agents are activating your platform, generating your usage signals, and driving your retention metrics in ways your current analytics were not built to surface accurately.

The founders who recognize this early and rebuild their activation definition, retention signals, and expansion model for an agent-first user base will have product metrics that reflect the actual market they are operating in. That clarity compounds. Better metrics lead to better roadmap decisions. Better roadmap decisions lead to better retention. Better retention leads to more predictable growth.

The ones who do not update the model will keep optimizing for a user profile that represents a smaller share of actual usage every quarter, while wondering why their growth is harder than the numbers suggest it should be.

If you are building or advising B2B SaaS products and want to think through what an agent-first product strategy looks like for your specific context, book a quick call with Lumeneze. We work with early-stage founders on exactly this: redefining growth systems before the old model causes real damage.